Is This App Safe? How to Spot AI-Built Software Before You Hand Over Your Data

A new app appears. The landing page is clean, the branding looks sharp, the signup flow is smooth. You enter your email, your name, maybe your phone number, maybe your card details. You get a welcome email. Everything feels normal.

Underneath, that app’s design and code might have been generated by AI over a weekend, deployed without a security review, and left storing your data in a database that anyone on the internet can query.

This isn’t hypothetical. In 2025, around 13,000 people had their personal information exposed through apps built on a single AI app-building platform; most of them had no idea the services they trusted were AI-generated, let alone insecure. The exposed data included names, emails, addresses, payment records, and in some cases API keys that opened the door to further compromise.

The uncomfortable reality is that the burden of figuring out whether an app is safe has quietly shifted onto end users. There’s no badge, no disclosure rule, no required security baseline before a service can start collecting your data.

So here’s a practical guide to spotting the warning signs and protecting yourself.

Why End Users Are Now in the Firing Line


Before AI tools made app and software development accessible to anyone, the path from “idea” to “app collecting your personal data” went through professional engineers, code reviews, and at least some baseline security thinking. That wall is mostly gone.

Today, anyone with a prompt and a credit card can:

- generate a fully working web app in an afternoon

- connect it to a database

- collect signups

- charge for a subscription

- start storing real user data

None of those steps require the person to understand authentication, access control, encryption, or how to keep API keys out of public code. And the resulting app can look indistinguishable from one built by a serious team.

The hard part for users is that the surface gives almost nothing away.

Signals That an App Might Be AI-Built and Under-Secured

No single signal is proof. But when several stack up, it’s worth slowing down before signing up.

The Domain and Hosting

- Hosted on a builder subdomain. URLs ending in .lovable.app, .vercel.app, .netlify.app, .replit.app, .bolt.new, or similar suggest the app may be early-stage or AI-built. That doesn’t make it automatically insecure, since plenty of legitimate apps run on these platforms, but it’s worth extra caution before handing over real data.

- Very new domain. A WHOIS lookup (free at sites like who.is) shows when the domain was registered. A site asking for payment details on a domain registered three weeks ago deserves more scrutiny than one with a five-year history.

- No clear company behind the product. No registered business name, no physical address, no team page, no LinkedIn presence for the founders. Real software usually has a paper trail.

The Branding and Copy

- Generic AI-flavoured naming. Names like “[Adjective][Noun].ai” or “[Verb]ly” combined with stock-feeling marketing copy. Not damning on its own, but a pattern.

- Hero images that look AI-generated. Slightly off hands, oddly perfect lighting, faces with the smooth uncanny look of generated portraits.

- Feature lists that read like a ChatGPT response. Three-bullet sections, identical sentence structures, every feature described in the same upbeat tone. Real product copy usually has more variation.

The Auth and Account Flow

- No two-factor authentication option. Any serious app handling personal data, payments, or business information should offer 2FA. Its absence is meaningful.

- Broken or weird login flows. Forgot-password emails that never arrive, confirmation emails from random Gmail addresses, signup forms that accept obviously invalid input. These often indicate broken auth design underneath.

- Asks for far more information than the product needs. A note-taking app asking for your date of birth and phone number on signup is a flag. The data is being collected because it can be, not because it has to be.

The Trust Signals That Should Be There

- No published privacy policy, or a clearly templated one. Look for specifics: what systems store your data, where they’re located, how long it’s kept, who it’s shared with. Generic templates with placeholder text suggest nobody thought hard about this.

- No security or trust page. Established apps usually have something — even a basic page covering encryption, data handling, and how breaches are reported.

- No working customer support channel. A support email that doesn’t reply, no live chat, no ticket system. If something goes wrong with your account, who do you contact?

The Practical Defence: Don’t Trust Apps With More Than They Need

Even with the best signal-spotting, you can’t always tell. So the realistic strategy is to assume any app might leak your data, and limit the damage if it does.

1. Use a Unique Password Every Time

This is the single highest-leverage habit a normal person can adopt. A password manager — Bitwarden, 1Password, Apple’s built-in Keychain, or your browser’s manager — generates a unique password per site and remembers it for you.

When (not if) one app gets breached, the leaked password is useless anywhere else.

2. Use Email Aliases

Services like Apple’s Hide My Email, Firefox Relay, SimpleLogin, or DuckDuckGo Email Protection let you create a different email address for every signup. They forward to your real inbox.

The benefit:

- If one alias starts getting spam or phishing, you know exactly which app leaked your address.

- You can shut off any alias instantly without affecting anything else.

- Attackers who buy leaked email lists can’t link your accounts together.

3. Use Virtual Card Numbers for Payments

If you’re entering payment details into something you don’t fully trust, virtual cards are your friend.

- Revolut, Wise, and many banks now offer disposable or single-merchant card numbers.

- In the US, Privacy.com is popular for this.

- Apple Pay and Google Pay also tokenise your real card so the merchant never sees the actual number.

You can cap the spending limit, lock the card to one merchant, or kill it instantly if something looks off.

4. Be Selective About What You Upload

AI-built apps often store uploaded files in cloud storage buckets that are misconfigured to be publicly accessible. If you wouldn’t be comfortable with a document being viewable by anyone with a guessable URL, don’t upload it to a service you don’t trust.

This applies especially to:

- ID documents (passports, driver’s licences)

- Financial records

- Medical information

- Anything containing other people’s personal details

5. Watch for Targeted Phishing After Signup

The clearest sign you’ve signed up for a leaky app is a phishing email that’s too well-informed. If you start getting messages that reference:

- a real order number

- the actual amount you spent

- a specific feature of the service

- your real name combined with details only that service knew

…assume the service has been breached. Change your password there, rotate any payment card you used, and treat any “urgent action required” emails from that service as suspect for the next few months.

6. Check Have I Been Pwned Periodically

haveibeenpwned.com is a free service run by security researcher Troy Hunt. It tells you which known data breaches have included your email address. You can also subscribe to be notified if your address appears in a future breach.

It’s the closest thing to a smoke alarm for your digital life.

7. For Anything Financial or Health-Related, Prefer Boring Established Services

This advice sounds dull, but it’s usually right. A ten-year-old company with an ugly website is generally safer than a beautifully designed app that launched last month, because the older company has had more time, more incidents, and more reasons to take security seriously.

For banking, insurance, healthcare, tax, or anything where a breach would seriously harm you, “exciting and new” is a feature you don’t actually want.

A Reasonable Mental Test Before You Sign Up

When you’re about to hand over your details to a service you don’t know well, run this quick check:

- Could I find anyone responsible? Is there a real company behind it, with a name and an address?

- Have I heard of this from a trustworthy source, or just an ad?

- Does it offer 2FA?

- Is it asking for more information than it actually needs?

- If this app leaked everything I’m about to give it tomorrow, what’s my exposure?

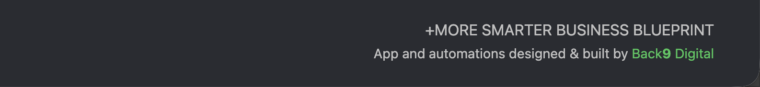

- Is the software or app built by a reputable company? Check the footer (right at the bottom) and see if it states who build it… See image below

If you can’t answer those comfortably, you don’t necessarily need to walk away; you just need to limit what you give. Use an email alias, a unique password, a virtual card, and the minimum information required.

What Should Change

End users shouldn’t have to be amateur security researchers to safely sign up for a service. The current state of things — where an AI-built app with no security review can collect your name, email, address, and payment details, and the only signal you get is a slightly-too-polished landing page — isn’t sustainable.

Eventually, this will likely shift through some combination of:

- regulation requiring disclosure of AI-generated software handling personal data

- platform-level security defaults that make basic mistakes harder to ship

- consumer trust marks for services that meet a security baseline

- insurance and liability pressure on businesses that deploy unreviewed AI-generated code

Until any of that arrives, the practical answer is the same as it’s always been with new technology: trust slowly, give up the minimum, and assume that what gets collected will eventually leak.

The good news is that the basic defences (unique passwords, email aliases, virtual cards, and a healthy scepticism) protect you against most of the damage even when an app does turn out to be insecure.

The bad news is that nobody else is going to do this for you.

Frequently Asked Questions

How can I tell if an app was built using AI?

There’s no definitive way, but signals include: hosting on AI builder subdomains (.lovable.app, .vercel.app, etc.), very new domains, generic AI-flavoured branding, AI-generated hero images, and feature lists that read like ChatGPT output. Most importantly: lack of a real company behind the product, no team or contact details, and no published security or privacy practices.

Is it dangerous to sign up for AI-built apps?

Not automatically; many AI-built apps are perfectly safe. The risk is that AI tools make it possible to launch software without the security review that used to happen by default. That means a higher proportion of AI-built apps ship with vulnerabilities like exposed databases, missing access controls, or leaked API keys. The damage usually shows up as data breaches and targeted phishing.

What information is most risky to give to an unknown app?

Anything that’s hard to change or rotate: government ID numbers, full date of birth, home address, and real card details (use virtual cards instead). Any password you reuse on other sites is just as risky, because one leak exposes every account that shares it. Email addresses and phone numbers are valuable to attackers too, but easier to protect using aliases.

How do I know if my data has already been leaked?

Check haveibeenpwned.com — it lists known breaches your email has appeared in. Also watch for unusually well-informed phishing emails referencing specific accounts or transactions. That’s often the first real-world sign that a service you used has been compromised.

What should I do if I think an app I signed up for has leaked my data?

Change the password on that service immediately. Change it on any other service where you reused that password (and switch to a password manager so you never reuse again). If you used a payment card, rotate it or use a virtual card going forward. Watch for phishing emails for several months. If serious data was exposed (ID, financial details), consider a credit monitoring service.